Over the past year, we’ve seen a wave of AI assistants enter marketing measurement. Open your LinkedIn feed on any given Tuesday and there’s a new one. “Introducing our AI assistant.” “Meet our copilot.” “Ask your data anything.”

I’ve looked at most of them. Here’s the pattern I keep seeing.

You type “what happened to my Meta ROAS last week?” The agent gives you a chart and a very confident answer. The demo is impressive. You leave the meeting nodding. Then the quarter ends and the numbers don’t move. Because the agent answered your question – but it didn’t change your decision.

The industry is confusing speed with progress. But faster answers to the wrong questions don’t move the business. And faster actions based on biased data make things worse.

That’s not what we built. Let me explain what we did build, and why.

Two problems, not one

When we started designing MIA, we weren’t starting from a technology brief. We were starting from frustration – a very specific pattern we kept seeing in every customer conversation, in every vertical, at every budget level.

The first problem is the measurement-to-action gap.

Picture this. A brand has best-in-class measurement. Proper MMM, incrementality testing, causal attribution. Measurement that would hold up to CFO scrutiny. And then what happens? That measurement sits in a dashboard. Budget decisions still happen in Excel, six weeks later, by a committee that has already forgotten what the model said. The insight ages. The media market moves on. By the time anyone acts, the decision is already sub-optimal.

The industry spent a decade solving the measurement problem. More Bayesian models. More granular attribution. More frequently refreshed MMMs. And somehow, despite all of that investment in insight generation, the gap between “the model told us what to do” and “we actually did it” stayed open.

Because the bridge across that gap was always a human. And humans work in silos. They need to coordinate across teams. They’re slow – not because they’re lazy, but because the process requires approvals, revisions, and meetings that were designed for a world that moves quarterly, not daily.

The second problem is insight fatigue compounding into decision paralysis.

Modern measurement platforms generate a lot of signals. When your MMM refreshes weekly, your incrementality tests run continuously, and your attribution model updates daily – you’re not short on data. You’re drowning in it. When everything is flagged as important, nothing is actionable.

Your team spends Monday morning triaging alerts instead of making decisions. By Wednesday, new data has arrived that contradicts Monday’s priorities. By Friday, the weekly review is a rehash of things everyone already knew but nobody acted on.

These two problems – the gap between insight and action, and the paralysis of too much signal – are what MIA was designed to solve. Not one or the other. Both.

And we gave ourselves a specific north star metric to keep us honest: time to first finance-trusted decision packet. Not time to first chat message. Not dashboard views. Not number of agent conversations. The singular test was: how quickly can a customer go from noisy data and competing methodologies to one defended, finance-ready decision they can take to the CFO?

That metric shaped every product choice we made – because it forced us to build for action, not for insight generation.

Why most AI agents won’t fix this

So here’s the obvious question: can’t AI close these gaps?

In theory, yes. In practice, almost nobody is doing it right.

There is an enormous amount of AI-washing in this space right now. And I say that as someone building in this space. Vendors are taking a BI tool, putting a chat interface on top of it, and calling it an agentic marketing intelligence platform.

Here’s the uncomfortable truth the industry isn’t talking about: an agent is only as good as the signal it acts on.

And most of the signals powering today’s marketing AI agents are built on correlation, not causation. Platform-reported attribution. Last-touch models. Multi-touch models that assign credit based on who touched the customer, not who actually influenced the sale.

This data is biased. Platforms design their attribution to make themselves look good – that’s not a conspiracy, it’s a business model. Meta wants to show you that Meta works. Google wants to show you that Google works. They both claim credit for the same conversions. They can’t both be right.
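To make the double-counting concrete, here is a minimal sketch with made-up numbers. The platform names come from the paragraph above; the conversion counts and the `overclaim_ratio` helper are hypothetical and only there to show the arithmetic.

```python
# Hypothetical numbers for illustration only; they do not come from any real account.
platform_claimed = {"meta": 620, "google": 540}  # conversions each platform reports
actual_orders = 900                               # orders recorded in the store backend


def overclaim_ratio(claimed: dict[str, int], actual: int) -> float:
    """Return total platform-claimed conversions divided by actual orders."""
    if actual <= 0:
        raise ValueError("actual must be positive")
    return sum(claimed.values()) / actual


ratio = overclaim_ratio(platform_claimed, actual_orders)
# A ratio above 1.0 means the platforms together claim more sales than happened.
print(f"Platforms claim {ratio:.0%} of actual orders")
```

Any ratio above 100% is credit for sales that were counted twice, and an agent reading those reports has no way to know which platform's claim to discount.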

Now build an AI agent on top of that.

You get an agent that confidently recommends you increase Meta spend because Meta’s attribution says Meta is crushing it. The recommendation is instant. The confidence is high. The direction is wrong.

I think about it like giving a GPS to someone on the wrong highway. The GPS recalculates faster. The ETA is more accurate. The route is more efficient. But you’re still heading to the wrong city.

Speed is not an advantage when the direction is wrong. And that’s the fundamental problem with most marketing AI agents right now: they’re solving for speed when the real problem is direction.

What “causal” actually changes

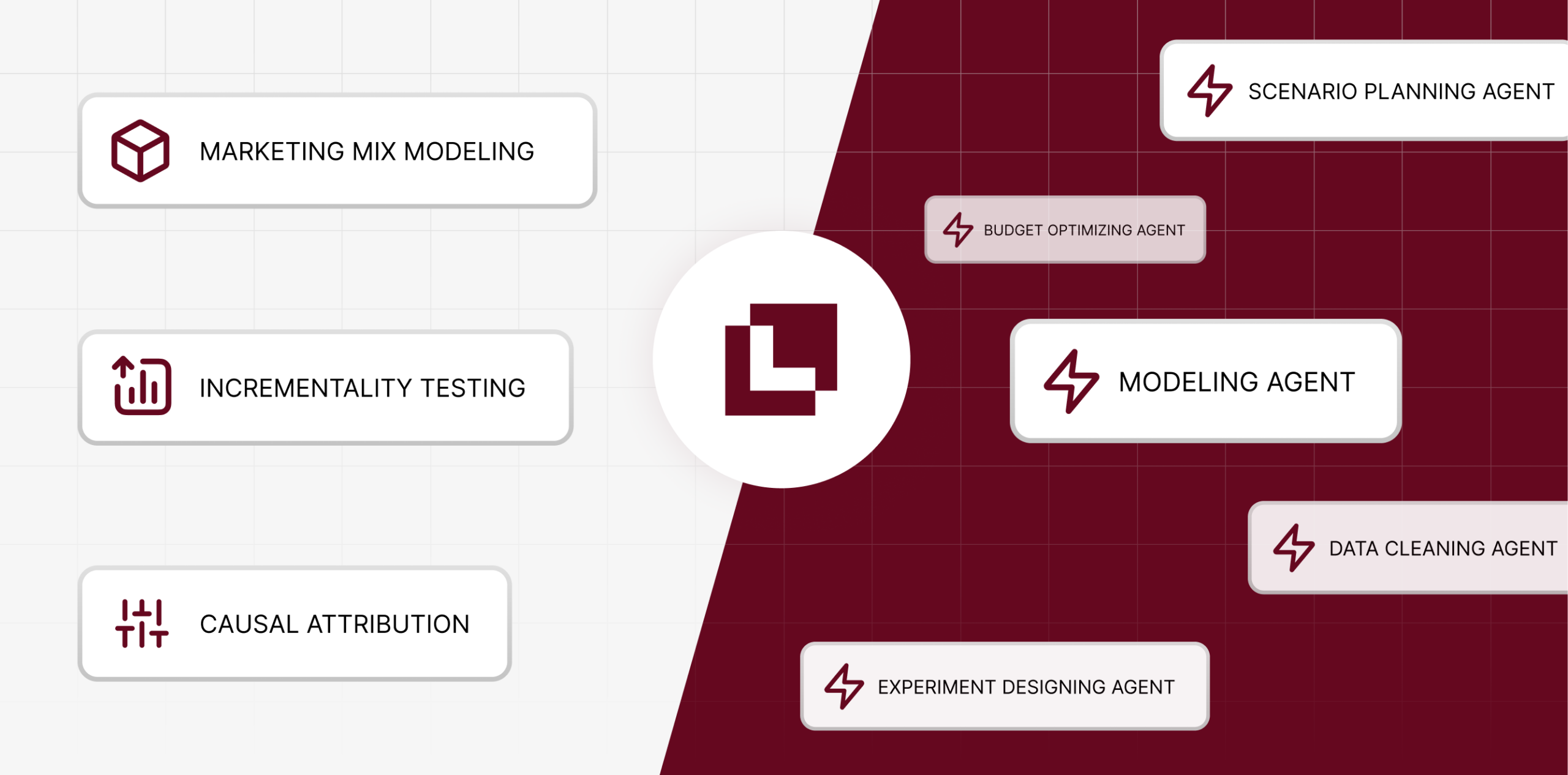

At Lifesight, we’ve spent seven years building a unified causal measurement engine. Three methodologies – causal marketing mix modeling, geo-based incrementality testing, and incrementality-calibrated attribution – working together in one system.

I want to explain why this matters for AI agents specifically, because it’s the whole reason MIA exists.

Most measurement tools give you one lens. MMM tells you the strategic picture – which channels to invest in at a high level. Incrementality testing tells you what’s real – through controlled experiments that prove causation. Attribution tells you the granular picture – which campaigns and creatives are performing day to day.

These three lenses often disagree. Your MMM says brand spend is working. Your attribution model says it’s not. Your incrementality test contradicts what your MMM predicted. Every CMO I’ve spoken to has lived through this.

In our engine, these three don’t just coexist – they calibrate each other continuously. Incrementality tests validate what the MMM predicts. The MMM informs where to run the next experiment. Attribution models get recalibrated based on experimental results. The system gets sharper over time because each methodology stress-tests the others.
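One common way to recalibrate attribution from experimental results is a per-channel multiplier: incremental conversions measured by the test, divided by what attribution credited over the same window. The sketch below illustrates that general idea only. It is not a description of how our engine is implemented, and every name and number in it (`GeoLiftResult`, `calibration_factor`, `calibrate`, the sample figures) is an assumption made for the example.

```python
from dataclasses import dataclass


@dataclass
class GeoLiftResult:
    """Outcome of one hypothetical geo-lift experiment."""
    channel: str
    incremental_conversions: float  # measured by the experiment
    attributed_conversions: float   # credited by attribution over the same window


def calibration_factor(result: GeoLiftResult) -> float:
    """Ratio of experimentally measured lift to attributed credit."""
    if result.attributed_conversions <= 0:
        raise ValueError("attributed_conversions must be positive")
    return result.incremental_conversions / result.attributed_conversions


def calibrate(attributed: dict[str, float], factors: dict[str, float]) -> dict[str, float]:
    """Scale attributed conversions by each channel's factor.

    Channels without an experiment keep a factor of 1.0, i.e. stay uncalibrated.
    """
    return {ch: value * factors.get(ch, 1.0) for ch, value in attributed.items()}


# Made-up experiment results: attribution under-credits CTV and over-credits paid search.
experiments = [
    GeoLiftResult("ctv", incremental_conversions=300.0, attributed_conversions=200.0),
    GeoLiftResult("paid_search", incremental_conversions=400.0, attributed_conversions=1000.0),
]
factors = {e.channel: calibration_factor(e) for e in experiments}
calibrated = calibrate({"ctv": 250.0, "paid_search": 800.0, "email": 100.0}, factors)
print(calibrated)
```

In this toy case the email channel is left untouched because no experiment covers it, which is also a hint about where to run the next test.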

Now imagine an AI agent reasoning from that foundation.

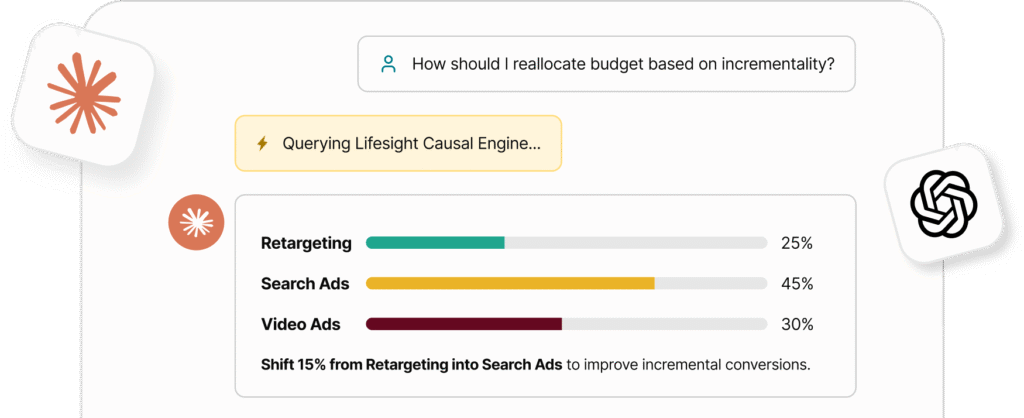

When MIA recommends shifting $100K from paid search to CTV, it’s not pulling a number from one dashboard. The geo-lift tests validated that CTV is driving real incremental sales. The MMM confirms the channel’s strategic fit within the broader media mix. The calibrated attribution shows the signal is consistent down to the campaign and ad set level. Three methods arrived at the same conclusion independently.

That’s not a suggestion. That’s a recommendation with causal evidence attached. The kind you can put in front of a CFO without six weeks of preparation.

This isn’t a feature difference from other agents. It’s an architectural difference. And it’s the difference between automating a bad decision faster and making a genuinely better one.

From assistant to ambient decision system

Most people hear “AI agent” and picture a chatbot where you ask questions. That’s stage one. Useful, but limited.

I think about MIA’s architecture as a five-stage evolution – from reactive assistant to what I call an ambient decision system. And I use the word “ambient” deliberately. The end state isn’t a tool you open and use. It’s a system that’s always on, always watching, always thinking about your business.

Stage 1: Ask. MIA answers questions on demand. But not the simple kind. You can have a genuine analytical conversation – not just “what was my ROAS last week” but “why is my CAC rising in the US while it’s flat in APAC?” And because MIA reasons from the causal engine – not a platform data feed – it can surface MMM coefficients, incrementality test results, and attribution overlap, then synthesize them into one explanation that holds up under scrutiny.

Stage 2: Watch. MIA stops waiting for your questions and starts monitoring continuously. It subscribes to business events – a data refresh completes, a forecast misses plan by a threshold, a channel’s incremental ROAS shifts materially, an experiment concludes. When any of those fire, MIA surfaces what matters. You stop triaging alerts. MIA does it for you.

Stage 3: Advise. MIA doesn’t just tell you what changed. It creates a structured decision packet: the options, the expected impact of each, the causal evidence, the confidence intervals, the rollback plan. Everything the CMO and CFO need to make a decision – assembled in seconds, not days. This is where insight fatigue dies. Instead of a hundred signals, you get one recommendation with the evidence attached.

Stage 4: Act. Once you give the approval, MIA executes. Within the guardrails you’ve configured, it can reallocate budget across channels, launch geo-incrementality experiments, update campaign parameters, and sync decisions to your ad platforms and BI tools. The six-week cycle becomes a same-day cycle. Not because we skipped the rigor. Because the rigor is built into the engine.

Stage 5: Learn. This is where the system compounds in value. MIA compares its predictions against actual outcomes. It tracks where its recommendations were right and wrong. It uses that feedback to make the next recommendation sharper. Every quarter of closed-loop causal data makes the MMM more precise, the recommendations more confident, and the guardrails better calibrated.

This is the part I find genuinely exciting. It’s a system that gets smarter the longer you use it. The model accuracy improves. The recommendations get more specific. The confidence intervals tighten. It’s more like a relationship than a subscription.
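For readers who think in data structures, here is a rough sketch of how the Watch and Advise stages could fit together: a threshold check that fires when incremental ROAS shifts, and a decision packet that bundles options, evidence, confidence intervals, and a rollback plan. The class names, fields, the 20% threshold, and the rule for picking a recommendation are all assumptions for illustration, not MIA's actual schema or logic.

```python
from dataclasses import dataclass, field


@dataclass
class Option:
    description: str
    expected_incremental_revenue: float
    confidence_interval: tuple[float, float]  # (low, high) around the expected value
    rollback_plan: str


@dataclass
class DecisionPacket:
    trigger: str                      # the business event that produced this packet
    options: list[Option]
    evidence: list[str] = field(default_factory=list)

    def recommended(self) -> Option:
        # Assumption: prefer the option with the best worst case (highest lower bound).
        return max(self.options, key=lambda o: o.confidence_interval[0])


def iroas_shifted(previous: float, current: float, threshold: float = 0.2) -> bool:
    """True when incremental ROAS moved by more than `threshold` in relative terms."""
    if previous <= 0:
        raise ValueError("previous must be positive")
    return abs(current - previous) / previous > threshold


# Hypothetical event: paid search incremental ROAS drops from 2.0 to 1.4.
packet = None
if iroas_shifted(previous=2.0, current=1.4):
    packet = DecisionPacket(
        trigger="paid_search incremental ROAS shifted",
        options=[
            Option("Shift $100K from paid search to CTV", 180_000.0,
                   (90_000.0, 260_000.0), "Restore last week's budgets"),
            Option("Hold current allocation", 0.0, (0.0, 0.0), "Nothing to roll back"),
        ],
        evidence=["geo-lift test", "MMM", "calibrated attribution"],
    )
    print(packet.recommended().description)
```

The point of the structure is that a reviewer sees one recommended option with its evidence and its undo path, rather than the raw alert that triggered it.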

The guardrails question

Every time we present MIA, someone asks: “But do I still have control?”

The honest answer: you have more control than you do now.

Right now, “control” means a manual process where a human reviews every budget decision. In practice, that means decisions are slow, biased by whoever built the deck, and limited by how many things one person can pay attention to simultaneously.

With MIA, you define the guardrails. Budget caps. Channel constraints. Approval thresholds – auto-execute below a certain amount, require human approval above it. Strategic priorities that MIA respects.

Below your threshold, MIA acts and logs everything. Above it, MIA proposes the action, shows you the causal evidence, and waits for your click. Every action is auditable. Every decision is reversible.
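The routing rule described above is simple enough to sketch in a few lines. This is an illustrative sketch, not MIA's configuration format: `Guardrails`, `ProposedShift`, `route`, and the dollar figures are hypothetical, and I am assuming that a shift exactly at the threshold goes to a human and that a shift breaching a channel cap is rejected outright.

```python
from dataclasses import dataclass


@dataclass
class Guardrails:
    auto_execute_limit: float              # shifts below this amount run without approval
    channel_budget_caps: dict[str, float]  # maximum budget allowed per channel


@dataclass
class ProposedShift:
    from_channel: str
    to_channel: str
    amount: float


def route(shift: ProposedShift, rails: Guardrails,
          current_budgets: dict[str, float], audit_log: list) -> str:
    """Decide what happens to a proposed budget shift and log the outcome."""
    new_budget = current_budgets.get(shift.to_channel, 0.0) + shift.amount
    cap = rails.channel_budget_caps.get(shift.to_channel, float("inf"))
    if new_budget > cap:
        decision = "rejected"          # would breach a hard channel cap
    elif shift.amount < rails.auto_execute_limit:
        decision = "auto_execute"      # below the threshold: act and log
    else:
        decision = "needs_approval"    # at or above the threshold: wait for a human
    audit_log.append((shift, decision))  # every routed shift is recorded
    return decision


rails = Guardrails(auto_execute_limit=25_000.0, channel_budget_caps={"ctv": 500_000.0})
budgets = {"ctv": 200_000.0, "paid_search": 400_000.0}
log: list = []

print(route(ProposedShift("paid_search", "ctv", 10_000.0), rails, budgets, log))
print(route(ProposedShift("paid_search", "ctv", 100_000.0), rails, budgets, log))
print(route(ProposedShift("paid_search", "ctv", 350_000.0), rails, budgets, log))
```

Because every call appends to the log, including rejected ones, the audit trail covers what the system declined to do as well as what it did.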

You don’t lose control. You stop pretending that a six-week manual process is “control.” It’s not control. It’s delay.

What this means for marketing teams

The next generation of marketing teams won’t be bigger. They’ll be smaller – and significantly more effective.

Right now, a typical mid-market marketing team has five to seven people whose primary job is turning measurement into decisions. Data analysis. Deck building. Report formatting. Experiment monitoring. Approval coordination.

MIA handles 80% of that operational work. Not the strategy. Not the creative thinking. Not the “should we enter this market” conversations. The operational measurement work that currently fills calendars and burns out analysts.

The bigger change isn’t headcount, though. It’s how the team spends its time. Less time in spreadsheets, more time on the work that actually requires a human brain – strategy, creative, customer understanding, competitive judgment.

And because the system learns, the gap between what MIA handles and what requires a human keeps shifting in your favor. Every quarter, MIA gets smarter. Every quarter, your team gets more leverage.

The bottom line

Every other vendor gave their agents a dashboard to read. We gave ours a causal engine to think with.

If you want to see what that looks like in practice – on your data, with your channels, against your actual measurement – book a demo. We’ll show you MIA live.

And if you’re not ready for that yet, let’s start with a free evaluation and audit. We’ll show you where the measurement-to-action gap is costing you the most – and what would change if you closed it.
